rational

Issue #225: Full List

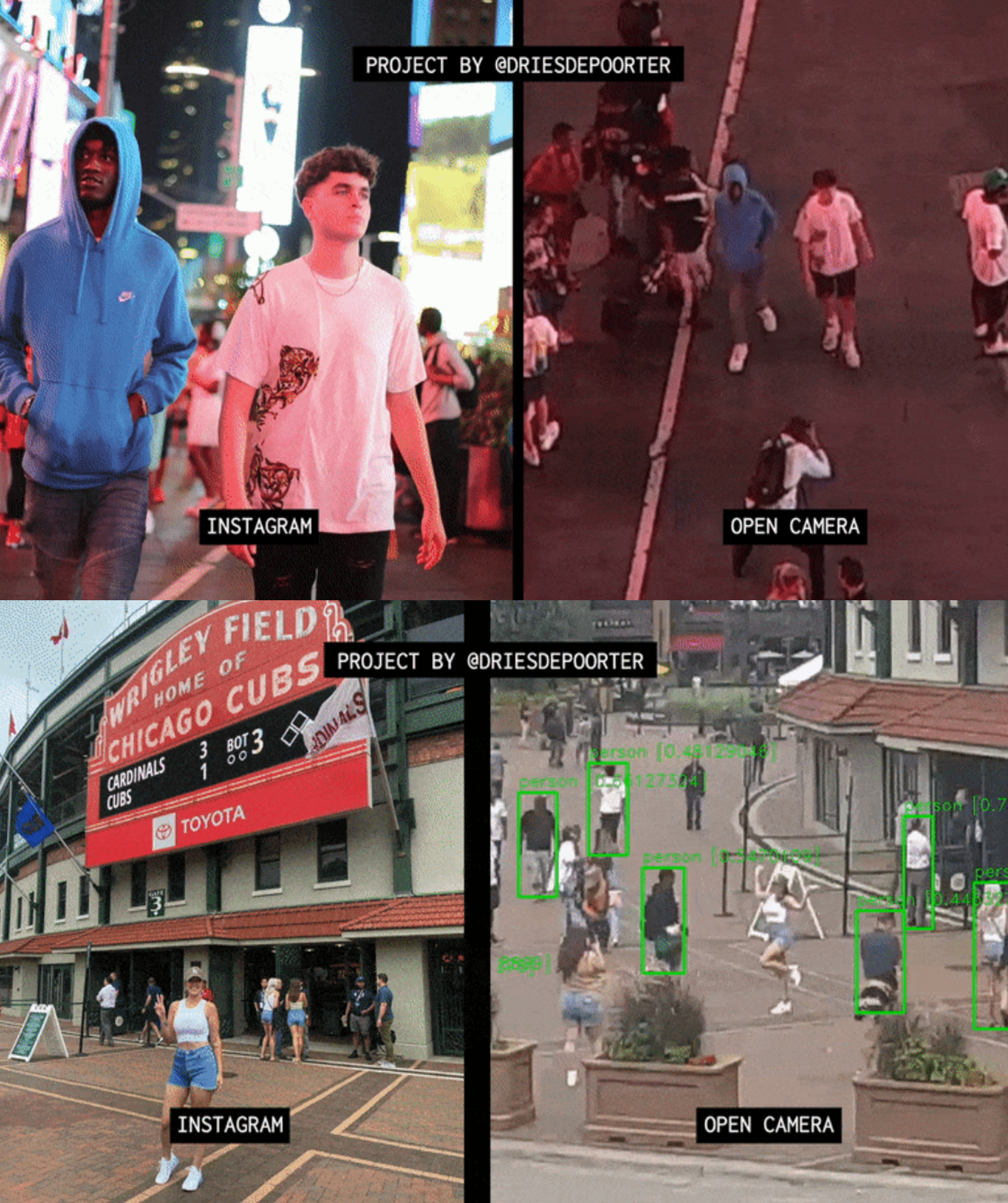

14 August, 2022

'The Follower' is software that uses AI and open cameras to find out how an Instagram photo was taken. // driesdepoorter.be

# Instrumental

How and why to turn everything into audio // ea247, 5 min

Prependix: Building a Bugs List prompts // CFAR 2017, 1 min

# Epistemic

Introducing Pastcasting: A tool for forecasting practice // aaron-ho-1, 1 min

Paper reading as a Cargo Cult // jem-mosig, 5 min

Proposal: Consider not using distance-direction-dimension words in abstract discussions // moridinamael, 5 min

Argument by Intellectual Ordeal // lc, 6 min

What is an agent in reductionist materialism? // Valentine, 1 min

Seeking PCK (Pedagogical Content Knowledge) // CFAR 2017, 5 min

Appendix: Jargon Dictionary // CFAR 2017, 25 min

Appendix: Hamming Questions // CFAR 2017, 2 min

Will Nonbelievers Really Believe Anything? // Scott Alexander, 3 min

# AI

DeepMind alignment team opinions on AGI ruin arguments // Vika, 16 min

Language models seem to be much better than humans at next-token prediction // Buck, 17 min

Oversight Misses 100% of Thoughts The AI Does Not Think // johnswentworth, 1 min

How To Go From Interpretability To Alignment: Just Retarget The Search // johnswentworth, 3 min

Interpretability/Tool-ness/Alignment/Corrigibility are not Composable // johnswentworth, 3 min

Jack Clark on the realities of AI policy // Kaj_Sotala, 3 min

Seriously, what goes wrong with "reward the agent when it makes you smile"? // TurnTrout, 2 min

How (not) to choose a research project // D0TheMath, 8 min

Refining the sharp left turn threat model // Vika, 3 min

I missed the crux of the alignment problem the whole time // zeshen, 4 min

Anti-squatted AI x-risk domains index // ete, 1 min

The Dumbest Possible Gets There First // Artaxerxes, 2 min

Encultured AI Pre-planning, Part 1: Enabling New Benchmarks // Andrew_Critch, 6 min

How Do We Align an AGI Without Getting Socially Engineered? (Hint: Box It) // Peter S. Park, 13 min

The alignment problem from a deep learning perspective // ricraz, 31 min

Against Relying on Evolution to Forecast AI Outcomes (Part 1) // quintin-pope, 9 min

Refine's First Blog Post Day // adamShimi, 1 min

Gradient descent doesn't select for inner search // ivan-vendrov, 5 min

Cultivating Valiance // DarkSym, 4 min

How I think about alignment // Linda Linsefors, 6 min

How much alignment data will we need in the long run? // Jacob_Hilton, 4 min

Team Shard Status Report // David Udell, 3 min

Can we get full audio for Eliezer's conversation with Sam Harris? // jskatt, 1 min

An extended rocket alignment analogy // remember, 5 min

Steelmining via Analogy // paulbricman, 2 min

Emergent Abilities of Large Language Models [Linkpost] // Aidan O'Gara, 1 min

Shapes of Mind and Pluralism in Alignment // adamShimi, 2 min

the Insulated Goal-Program idea // carado-1, 1 min

goal-program bricks // carado-1, 2 min

My summary of the alignment problem // peter-hrosso, 1 min

Complexity No Bar to AI (Or, why Computational Complexity doesn't matter for real life problems) // sharmake-farah, 3 min

Project proposal: Testing the IBP definition of agent // jeremy-gillen, 3 min

An Uncanny Prison // Nathan1123, 2 min

Dissected boxed AI // Nathan1123, 1 min

Thoughts on the good regulator theorem // JonasMoss, 5 min

Broad Basins and Data Compression // jeremy-gillen, 8 min

Disagreements about Alignment: Why, and how, we should try to solve them // ojorgensen, 19 min

Inner search processes are not compute-efficient // ivan-vendrov, 5 min

The OpenAI playground for GPT-3 is a terrible interface. Is there any great local (or web) app for exploring/learning with language models? // avivo, 1 min

Encultured AI Pre-planning, Part 2: Providing a Service // Andrew_Critch, 3 min

Timelines explanation post part 1 of ? // nathan-helm-burger, 2 min

Many Gods refutation and Instrumental Goals. (Proper one) // aditya-malik, 1 min

Is it possible to find venture capital for AI research org with strong safety focus? // AnonResearch, 1 min

How Deadly Will Roughly-Human-Level AGI Be? // David Udell, 1 min

A little playing around with Blenderbot3 // nathan-helm-burger, 1 min

Encultured AI, Part 1 Appendix: Relevant Research Examples // Andrew_Critch, 7 min

Infant AI Scenario // Nathan1123, 4 min

Artificial intelligence wireheading // Big Tony, 1 min

Formalizing Alignment // Marv K, 2 min

How would two superintelligent AIs interact, if they are unaligned with each other? // Nathan1123, 1 min

Why Not Slow AI Progress? // Scott Alexander, 11 min

# Meta-ethics

Lamentations, Gaza and Empathy // yair-halberstadt, 3 min

What kind of moral framework would you spread if you could decide? // NinaR, 1 min

Moral Progress Is Not Like STEM Progress // Robin Hanson, 4 min

# Decision theory

Most Ivy-smart students aren't at Ivy-tier schools // aaronb50, 9 min

How to bet against civilizational adequacy? // Wei_Dai, 1 min

What are some good arguments against building new nuclear power plants? // RomanS, 1 min

Are ya winning, son? // Nathan1123, 2 min

Do advancements in Decision Theory point towards moral absolutism? // Nathan1123, 4 min

How would Logical Decision Theories address the Psychopath Button? // Nathan1123, 1 min

Perfect Predictors // aditya-malik, 1 min

# Math and CS

What is estimational programming? Squiggle in context // quinn-dougherty, 8 min

# Books

Your Book Review: God Emperor Of Dune // Scott Alexander, 20 min

# Community

Troll Timers // Screwtape, 3 min

Dissent Collusion // Screwtape, 3 min

# Culture war

A Cyclic Theory Of Subcultures // Scott Alexander, 8 min

# Misc

The lessons of Xanadu // jasoncrawford, 9 min

All the posts I will never write // Self-Embedded Agent, 8 min

Steganography in Chain of Thought Reasoning // alex-ray, 6 min

The Medium Is The Bandage // party-girl, 11 min

Content generation. Where do we draw the line? // Q Home, 2 min

Dissolve: The Petty Crimes of Blaise Pascal // JohnBuridan, 7 min

What are some Works that might be useful but are difficult, so forgotten? // TekhneMakre, 1 min

Expected (Social) Value // algrthms, 3 min

Do meta-memes and meta-antimemes exist? e.g. 'The map is not the territory' is also a map // M. Y. Zuo, 1 min

Narrative Slipstream Effects // Venkatesh Rao, 14 min

Violent Offense Under Bounties & Vouchers // Robin Hanson, 3 min

A Portrait of Civil Servants // Robin Hanson, 1 min

# Podcasts

Radio Bostrom: Audio narrations of papers by Nick Bostrom // PeterH, 2 min

#135 – Samuel Charap on key lessons from five months of war in Ukraine // 54 min

c41: Script Draft 1, beats 15-17 // Constellation, 22 min

168 – Unions Are Governments // The Bayesian Conspiracy, 161 min

# Rational fiction

A sufficiently paranoid paperclip maximizer // RomanS, 2 min

The Host Minds of HBO's Westworld. // Nerret, 3 min

# Videos of the week

AI stalks influencers in the wild to expose horrors of high-tech surveillance // b 00, 1 min